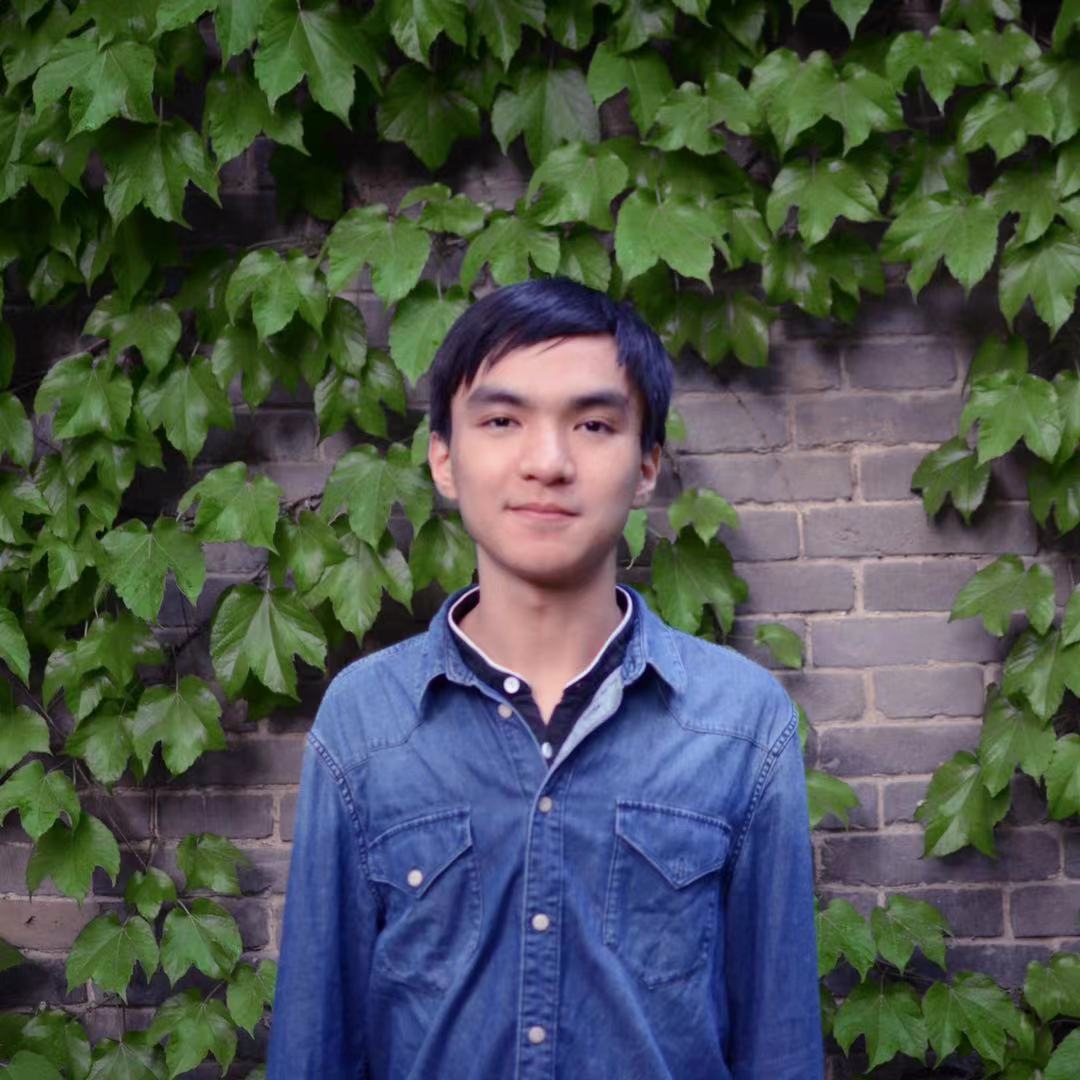

About Me

I am Kaixuan Huang (黄凯旋), a final-year Ph.D. student in the Department of Electrical and Computer Engineering at Princeton University. I am fortunate to be advised by Professor Mengdi Wang. Before that, I received a B.S. in Mathematics and a B.S. in Computer Science from Peking University. My research is partially supported by a Google PhD Fellowship.

My first name, Kaixuan, means “triumph” and is pronounced “Kye Sh-wen” (audio sample).

Contact me via email at kaixuanh@princeton.edu, my X account, or WeChat: [QR Code].

Research Overview

My research focuses on (1) understanding how language models may fail when inputs are out-of-distribution or adversarial, and (2) improving the capabilities and robustness of language models via scalable human/synthetic data pipelines and algorithmic innovations. My recent topics include:

Robustness of RLHF and RLVR:

- The alignment of LLMs can be easily broken by exploiting parameter-efficient finetuning (PEFT) methods. We used prefix tuning methods to construct adversarial prefixes that undo the safety alignment of LLMs [1]. We also developed pruning and low-rank adaptation methods to identify and isolate safety-critical regions of LLMs [2].

- Reasoning models may memorize the problem-solving techniques from the training set and blindly apply them when the input problems are slightly perturbed [3].

Inference-time Algorithms & Agents: Scaling up inference-time compute and augmenting LLMs with tools are principled approaches to boost capabilities and improve robustness.

- To accelerate high-quality data generation, we developed an efficient decoding algorithm [4] and an inference-time alignment technique [5], borrowing techniques from traditional RL.

- Besides handcrafting agents [6], I have been thinking about distilling agents into foundation models and improving them through reinforcement learning.

I am also interested in long-term research that can lead to a paradigm shift in current AI systems.

News

- 05/2025: I will give a talk at Google DeepMind about MATH-Perturb.

- 05/2025: MATH-Perturb was accepted to ICML 2025.

- 11/2024: Thrilled to receive the Google PhD Fellowship 2024.

- 10/2024: I will give a talk at INFORMS 2024 about CRISPR-GPT.

- 03/2024: I started my internship at Google DeepMind, working with Zheng Wen and Csaba Szepesvari.